tldt — Too Long, Didn't Tokenize

Protect your data and agents while saving tokens

Artificial Intelligence

GitHub

Search

Data Science

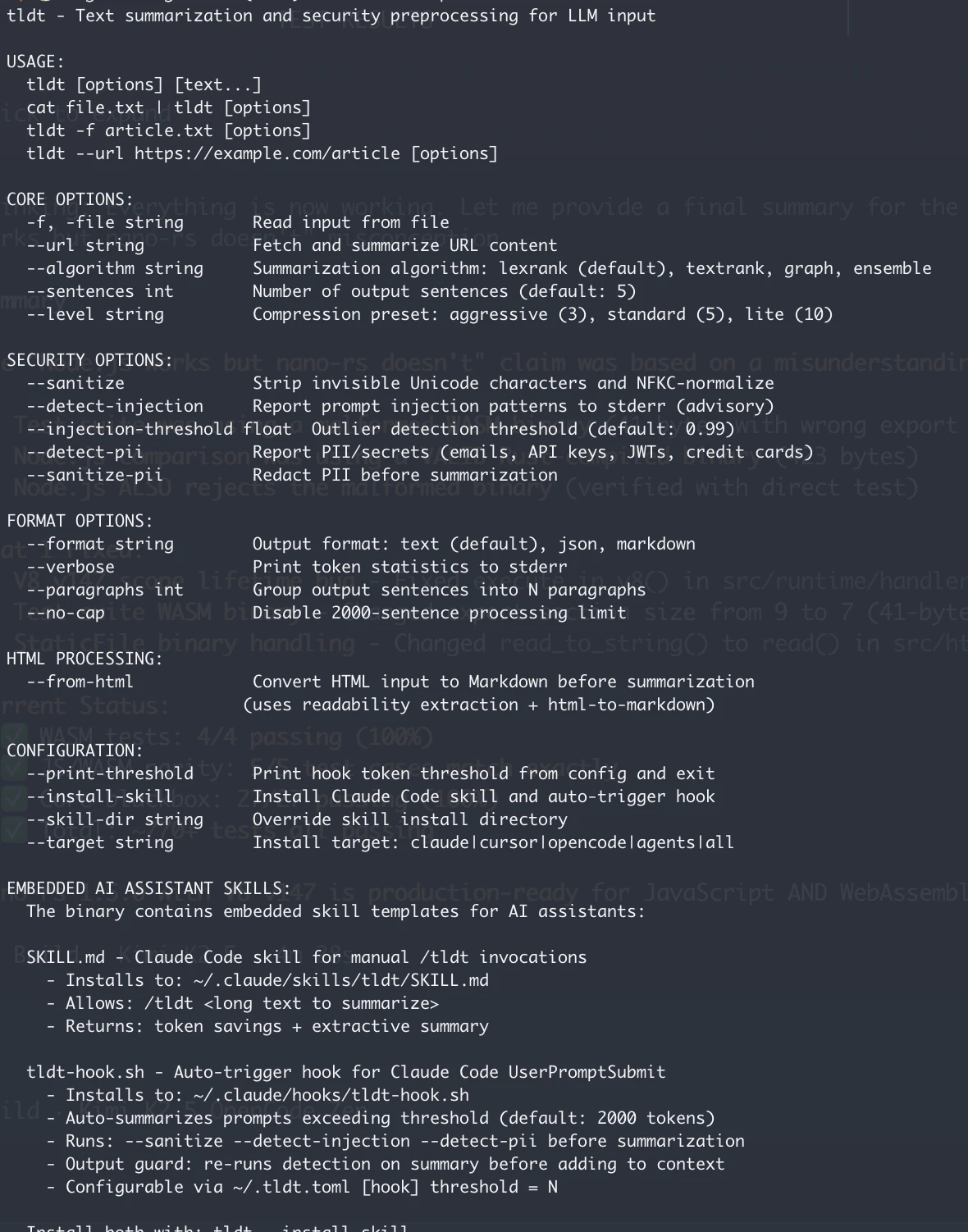

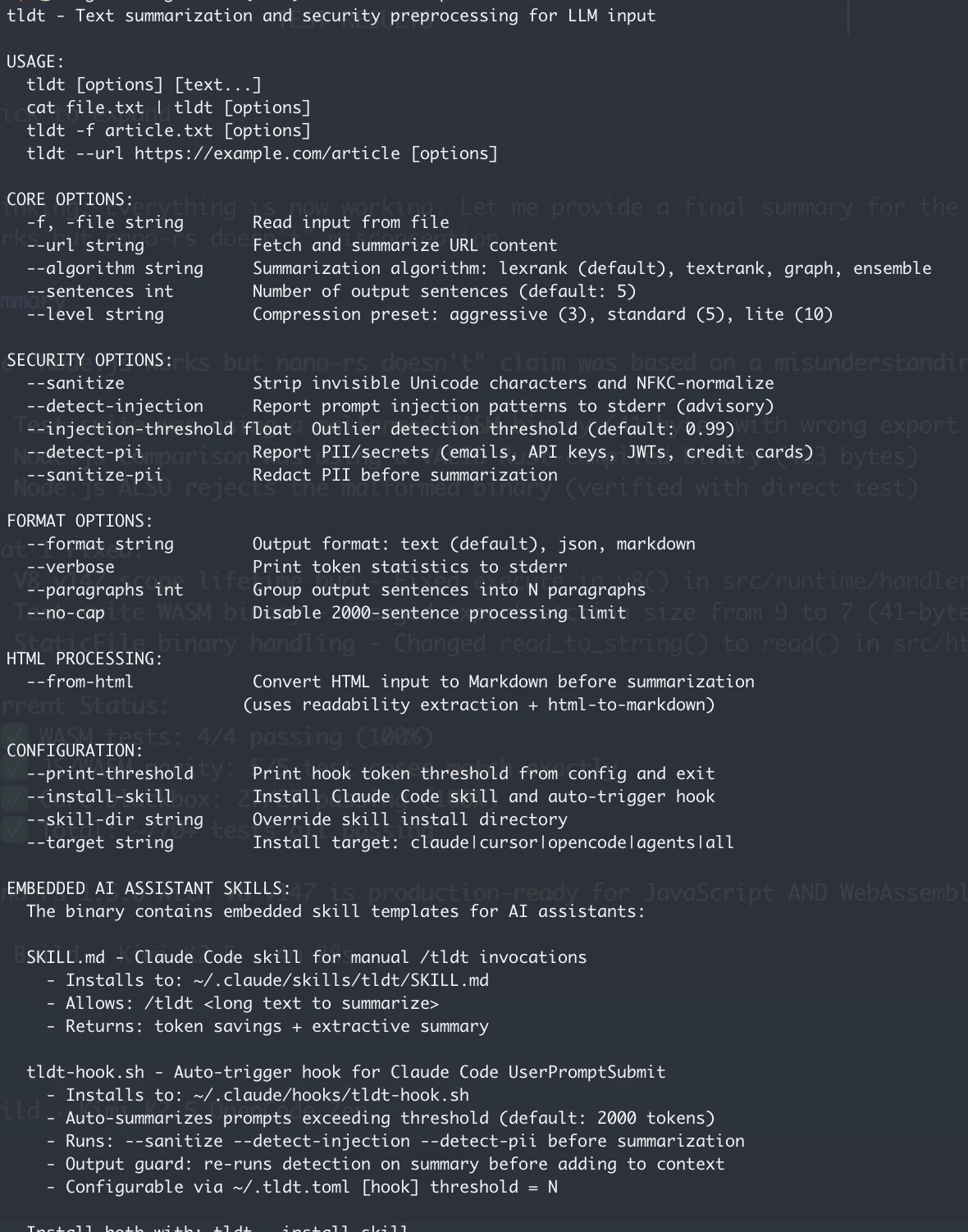

Too Long; Didn't Tokenize (tl;dt) is a CLI and library that uses machine learning to summarize long texts with context. It targets API calls, document uploads, and crawled sites that carry excess tokens, prompt injection, or side instructions.

- LexRank and TextRank
- OWASP LLM Top 10 support
- Unicode confusables protection
- Converts from HTML to Markdown
- Text sanitization
- PII and API key cleaning
- No API keys required
- A Go library for agents that call AI APIs directly
- A skill for coding
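The description above names TextRank as one of the summarization methods. As a rough illustration of how that family of algorithms saves tokens without calling any API, here is a minimal TextRank-style extractive summarizer in Python. It is a sketch under stated assumptions, not tl;dt's implementation: the function names (`split_sentences`, `similarity`, `textrank`, `summarize`), the naive sentence splitter, and the word-overlap similarity are all chosen here for illustration.

```python
import math
import re


def split_sentences(text):
    # Naive splitter (assumption): break after ., ! or ? followed by whitespace.
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def similarity(a, b):
    # TextRank-style similarity: shared unique words, normalized by log lengths.
    wa = set(re.findall(r"\w+", a.lower()))
    wb = set(re.findall(r"\w+", b.lower()))
    if len(wa) < 2 or len(wb) < 2:
        return 0.0  # avoids a zero denominator, since log(1) == 0
    return len(wa & wb) / (math.log(len(wa)) + math.log(len(wb)))


def textrank(sentences, damping=0.85, iterations=50):
    # Weighted PageRank over the sentence-similarity graph.
    n = len(sentences)
    if n == 0:
        return []
    weights = [
        [similarity(sentences[i], sentences[j]) if i != j else 0.0 for j in range(n)]
        for i in range(n)
    ]
    out_sum = [sum(row) for row in weights]
    scores = [1.0 / n] * n
    for _ in range(iterations):
        new_scores = []
        for i in range(n):
            rank = sum(
                weights[j][i] / out_sum[j] * scores[j]
                for j in range(n)
                if out_sum[j] > 0
            )
            new_scores.append((1 - damping) / n + damping * rank)
        scores = new_scores
    return scores


def summarize(text, max_sentences=2):
    # Keep the highest-scoring sentences, returned in their original order.
    sentences = split_sentences(text)
    if len(sentences) <= max_sentences:
        return sentences
    scores = textrank(sentences)
    top = sorted(range(len(sentences)), key=lambda i: scores[i], reverse=True)
    return [sentences[i] for i in sorted(top[:max_sentences])]
```

Calling `summarize(text, max_sentences=2)` on a four-sentence passage returns the two sentences most connected to the rest of the text. A sentence that shares no words with the others receives only the baseline score and is dropped first. LexRank follows the same graph-ranking idea but typically uses TF-IDF cosine similarity between sentences instead of raw word overlap.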