APIEval-20


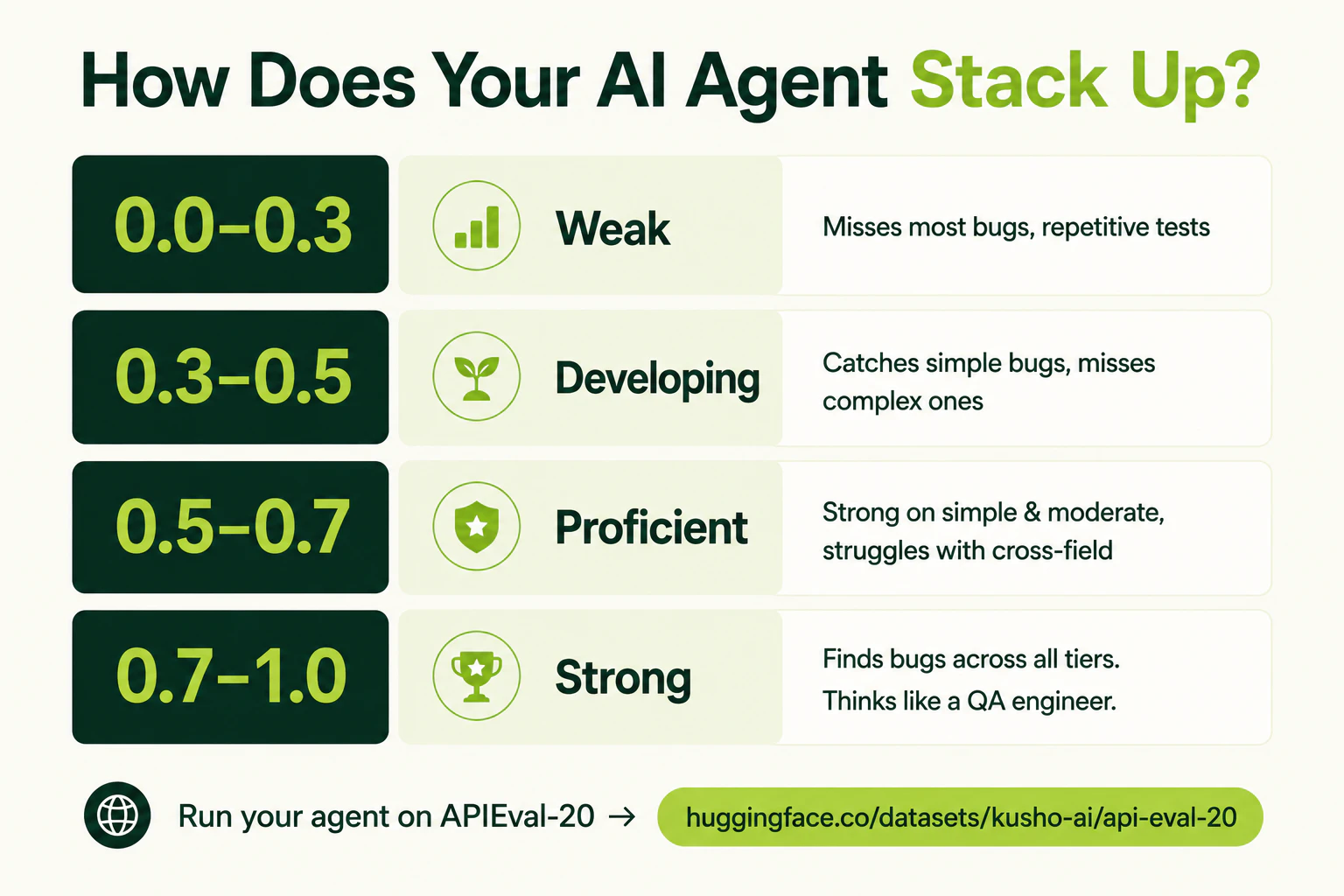

An open benchmark for AI agents that test APIs

Artificial Intelligence

Developer Tools

API

APIEval-20 is a black-box benchmark for API testing agents. Each agent gets only a JSON schema and one sample payload, then generates a test suite. We run those tests against live reference APIs with planted bugs and score bug detection, API coverage, and efficiency. Unlike LLM-as-judge evals, scoring is fully objective: a bug is either caught or it isn’t. Tasks span auth, errors, pagination, schemas, and multi-step flows. Open on Hugging Face.
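As a rough illustration of why this kind of scoring is objective, the sketch below computes three numbers from the raw outcomes of a test run. It is not the benchmark's actual scoring code: the `Outcome` record, the `score_suite` function, the bug IDs, the endpoint names, and the three formulas (fraction of planted bugs caught, fraction of endpoints exercised, bugs caught per test) are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    """Hypothetical record of one generated test run against a reference API."""
    endpoint: str                        # endpoint the test exercised
    caught_bugs: frozenset = frozenset() # IDs of planted bugs this test exposed


def score_suite(outcomes, planted_bugs, endpoints):
    """Score a test suite on detection, coverage, and efficiency.

    The formulas are illustrative assumptions, not APIEval-20's definitions.
    """
    planted = set(planted_bugs)
    known_endpoints = set(endpoints)

    # A planted bug counts once, no matter how many tests expose it.
    caught = set()
    for outcome in outcomes:
        caught |= outcome.caught_bugs & planted

    # Only endpoints that belong to the API under test count toward coverage.
    covered = {o.endpoint for o in outcomes} & known_endpoints

    return {
        "bug_detection": len(caught) / len(planted) if planted else 0.0,
        "coverage": len(covered) / len(known_endpoints) if known_endpoints else 0.0,
        # Efficiency here means distinct bugs caught per test executed.
        "efficiency": len(caught) / len(outcomes) if outcomes else 0.0,
    }


# Made-up data: 4 tests, 3 planted bugs, 4 endpoints.
outcomes = [
    Outcome("/login", frozenset({"BUG-1"})),
    Outcome("/login", frozenset({"BUG-1"})),  # duplicate catch, counted once
    Outcome("/items", frozenset({"BUG-2"})),
    Outcome("/orders"),                       # exercised, but caught nothing
]
scores = score_suite(
    outcomes,
    planted_bugs=["BUG-1", "BUG-2", "BUG-3"],
    endpoints=["/login", "/items", "/orders", "/users"],
)
print(scores)
```

Because every input is a recorded fact (which test hit which endpoint, which planted bug it exposed), two people running this on the same outcomes get the same scores, which is the property the description contrasts with LLM-as-judge evals.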
