Ollama v0.19

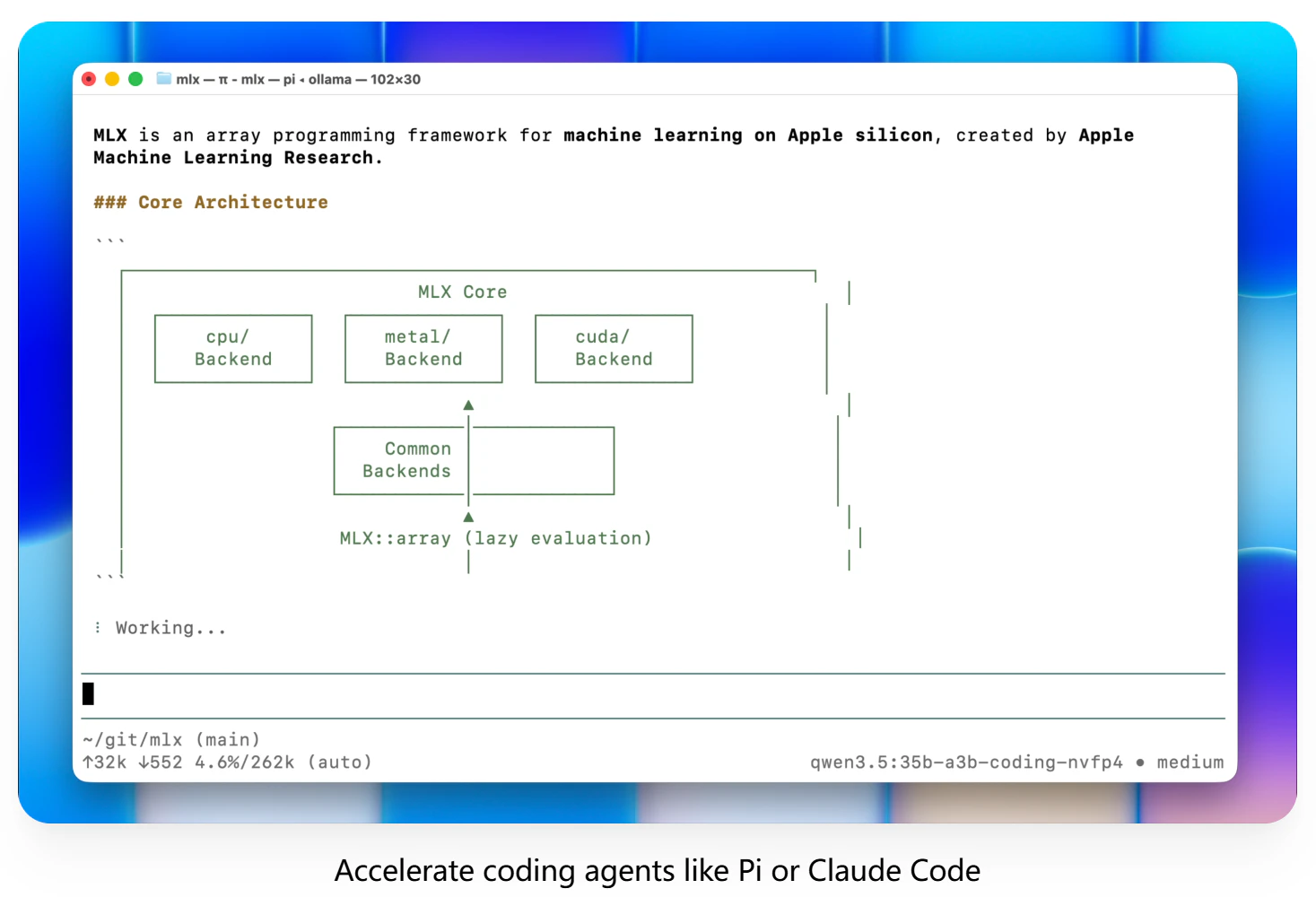

Massive local model speedup on Apple Silicon with MLX

Artificial Intelligence

GitHub

Open Source

Apple

Ollama v0.19 rebuilds Apple Silicon inference on top of MLX, bringing much faster local performance for coding and agent workflows. It also adds NVFP4 support, along with smarter cache reuse, snapshots, and eviction, for more responsive sessions.

Votes: 360